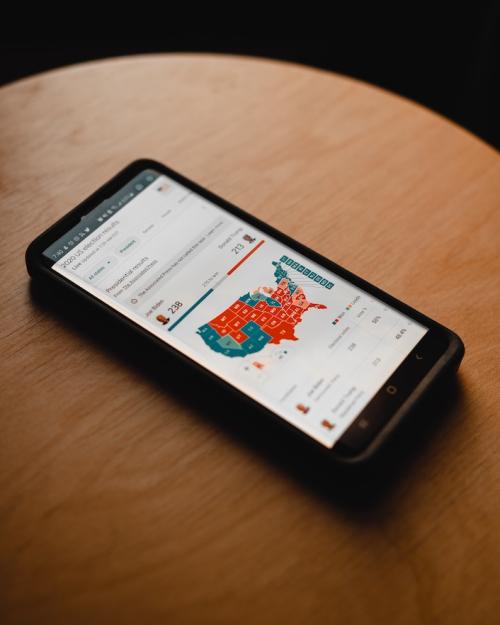

Starting in November, Google will require political ads to disclose the use of artificial intelligence. Sarah Kreps, professor of government in the College of Arts & Sciences and director of the Tech Policy Institute at Cornell University, has studied how generative AI, when misused, threatens to undermine trust in democracies.

“With AI-generated content increasingly flooding the internet, people will not know what to believe,” says Kreps. “And if they don't know what to believe, they might just not believe or trust anything. That erosion of trust is particularly dangerous in a political context. Nowhere is this issue more pressing than with the 2024 election cycle.

“Google's decision to require the disclosure of AI in political ads gestures toward the type of transparency and disclosure measures that research finds can backstop trust toward AI and those who use it. The requirement is also relatively costless and should be a model that other social media and news platforms will follow. It might not solve the problem of inauthentic political content online in general but will help in the specific setting of political ads. It may also put political campaigns on notice that there are some limits to the liberties they can take with these new technologies.”

For interviews, contact Becka Bowyer, cell: (607) 220-4185, rpb224@cornell.edu.